MySQL 5.7 基于组复制(MySQL Group Replication) - 运维小结

之前介绍了Mysq主从同步的异步复制(默认模式)、半同步复制、基于GTID复制、基于组提交和并行复制 (解决同步延迟),下面简单说下Mysql基于组复制(MySQL Group Replication) 操作。

一、组复制 (MGR)介绍

MySQL Group Replication(简称MGR)是MySQL官方于2016年12月推出的一个全新的高可用与高扩展的解决方案。组复制是MySQL5.7版本出现的新特性,它提供了高可用、高扩展、高可靠的MySQL集群服务。MySQL组复制分单主模式和多主模式,mysql 的复制技术仅解决了数据同步的问题,如果 master 宕机,意味着数据库管理员需要介入,应用系统可能需要修改数据库连接地址或者重启才能实现。(这里也可以使用数据库中间件产品来避免应用系统数据库连接的问题,例如 mycat 和 atlas 等产品)。组复制在数据库层面上做到了,只要集群中大多数主机可用,则服务可用,也就是说3台服务器的集群,允许其中1台宕机。

1.1 组复制的两种模式

- 在单主模式下, 组复制具有自动选主功能,每次只有一个 server成员接受更新;

- 在多主模式下, 所有的 server 成员都可以同时接受更新;

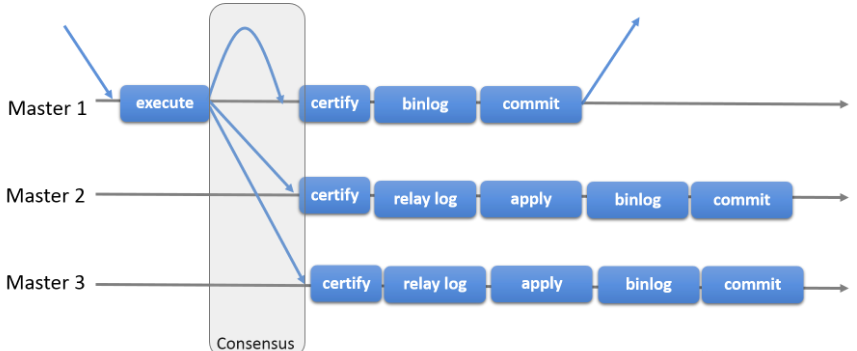

1.2 组复制原理

组复制是一种可用于实现容错系统的技术。 复制组是一个通过消息传递相互交互的server集群。通信层提供了原子消息(atomic message)和完全有序信息交互等保障机制,实现了基于复制协议的多主更新。复制组由多个 server成员构成,并且组中的每个server 成员可以独立地执行事务。但所有读写(RW)事务只有在冲突检测成功后才会提交。只读(RO)事务不需要在冲突检测,可以立即提交。句话说, 对于任何 RW 事务,提交操作并不是由始发 server 单向决定的,而是由组来决定是否提交。准确地说,在始发 server 上,当事务准备好提交时,该 server 会广播写入值(已改变的行)和对应的写入集(已更新的行的唯一标识符)。然后会为该事务建立一个全局的顺序。最终,这意味着所有 server 成员以相同的顺序接收同一组事务。因此, 所有 server 成员以相同的顺序应用相同的更改,以确保组内一致。

基于组的复制(Group-basedReplication)是一种被使用在容错系统中的技术。Replication-group(复制组)是由能够相互通信的多个服务器(节点)组成的。在通信层,Groupreplication实现了一系列的机制:比如原子消息(atomicmessage delivery)和全序化消息(totalorderingof messages)。这些原子化,抽象化的机制,为实现更先进的数据库复制方案提供了强有力的支持。MySQL Group Replication正是基于这些技术和概念,实现了一种多主全更新的复制协议。

简而言之,一个Replication-group就是一组节点,每个节点都可以独立执行事务,而读写事务则会在于group内的其他节点进行协调之后再commit。因此,当一个事务准备提交时,会自动在group内进行原子性的广播,告知其他节点变更了什么内容/执行了什么事务。

这种原子广播的方式,使得这个事务在每一个节点上都保持着同样顺序。这意味着每一个节点都以同样的顺序,接收到了同样的事务日志,所以每一个节点以同样的顺序重演了这些事务日志,最终整个group保持了完全一致的状态。然而,不同的节点上执行的事务之间有可能存在资源争用。这种现象容易出现在两个不同的并发事务上。

假设在不同的节点上有两个并发事务,更新了同一行数据,那么就会发生资源争用。面对这种情况,GroupReplication判定先提交的事务为有效事务,会在整个group里面重演,后提交的事务会直接中断,或者回滚,最后丢弃掉。因此,这也是一个无共享的复制方案,每一个节点都保存了完整的数据副本。看下图描述了具体的工作流程,能够简洁的和其他方案进行对比。这个复制方案,在某种程度上,和数据库状态机(DBSM)的Replication方法比较类似。

MySQL组复制协议工作流程:

需要注意:MySQL组复制是一种 share-nothing 复制方案,其中每个 server 成员都有自己的完整数据副本。

1.3 组复制特点

- 高一致性

基于原生复制及 paxos 协议的组复制技术,并以插件的方式提供,提供一致数据安全保证。确保组内数据最终一致性【重要】(通过分布式协议和分布式recovery机制保证);

- 高容错性

确保组内高可用。只要不是大多数节点坏掉就可以继续工作,有自动检测机制,当不同节点产生资源争用冲突时,不会出现错误,按照先到者优先原则进行处理,并且内置了自动化脑裂防护机制;

- 高扩展性

良好的扩展能力,可动态增删节点,组成员自动管理。节点的新增和移除都是自动的,新节点加入后,会自动从其他节点上同步状态,直到新节点和其他节点保持一致,如果某节点被移除了,其他节点自动更新组信息,自动维护新的组信息;

- 高灵活性

有单主模式和多主模式,单主模式下,会自动选主,所有更新操作都在主上进行;

多主模式下,所有 server 都可以同时处理更新操作。

- 多写,写冲突检测;

1.4 组复制故障检测

故障检测是提供关于哪些 server 可能已死的信息(猜测)的分布式服务。 某个 server 无响应时触发猜测,组中其余成员进行协调决定以排除给定成员。如果某个 server 与组的其余成员隔离,则它会怀疑所有其他 server 都失败了。由于无法与组达成协议(因为它无法确保仲裁成员数),其怀疑不会产生后果。当服务器以此方式与组隔离时,它无法执行任何本地事务。 在线 server 列表通常称为视图,新成员server的加入离开,无论是自愿还是被迫的离开,该组都会动态地重新规划其配置,并触发视图更新。

1.5 组复制的限制

- 存储引擎必须为Innodb,即仅支持InnoDB表,并且每张表一定要有一个主键,用于做write set的冲突检测;

- 每个表必须提供主键;

- 只支持ipv4,网络需求较高;

- 必须打开GTID特性,二进制日志格式必须设置为ROW,用于选主与write set;

- COMMIT可能会导致失败,类似于快照事务隔离级别的失败场景;

- 目前一个MGR集群组最多支持9个节点;

- 不支持外键于save point特性,无法做全局间的约束检测与部分部分回滚;

- 二进制日志binlog不支持Replication event checksums;

- 多主模式(也就是多写模式) 不支持SERIALIZABLE事务隔离级别;

- 多主模式不能完全支持级联外键约束;

- 多主模式不支持在不同节点上对同一个数据库对象并发执行DDL(在不同节点上对同一行并发进行RW事务,后发起的事务会失败);

二、组复制技术实现

2.1 组复制与传统复制的区别和大幅改进

传统复制

主-从复制: 有一个主和不等数量的从。主节点执行的事务会异步发送给从节点,在从节点重新执行。(异步和半同步;半同步相对异步Master会确认Slave是否接到数据,更加安全)

并行复制: 复制->广播->正式复制

组复制相比传统复制的优势在于:

- 弹性复制(高扩展性): server动态添加移除;

- 高可用分片(高扩展性): 分片实现写扩展,每个分片是一个复制组;

- 替代主从复制(高扩展性): 整组写入,避免单点争用;

- 自动化系统: 自动化部署Mysql复制到已有复制协议的自动化系统;

- 故障检测与容错: 自动检测,若服务faild,组内成员大多数达成认为该服务已不正常,则自动隔离;

在MySQL组复制环境中,组内成员会构成一个视图,组内成员主动加入或离开(主动或被动),都会更新组配置,更新视图。成员自愿离开,先更新组配置,然后采用大多数成员(不包含主动脱离的成员)意见是否确认该成员离开更新视图。如果是故障要排除,则需大多数服务确认(包括故障成员意见),然后才会更新组配置和视图。

特别注意:组复制最大允许即时故障数:f=(n-1)/2,多数正常则正常

2.2 组复制优点小结

1) 在master-slave之间实现了强一致性;

对于只读事务,组间实例无需进行通讯,就可以处理事务;对于读写(RW)事务,组内所有节点必须经过通讯,共同决定事务提交与否。

2) 事务冲突处理

在高并发的多写模式下,节点间事务的提交可能会产生冲突,比如,两个不同的事务在两个节点上操作了同一行数据,这个时候就会产生冲突。首先,Group Replication(GR)能够识别到这个冲突,然后对此的处理是,依赖事务提交的时间先后顺序,先发起提交的节点能够正确提交,而后面的提交,会失败

3) 故障检测

MGR自带故障检测机制,可以识别组内成员是否挂掉(组内节点心跳检测)。当一个节点失效,将由其他节点决定是否将这个失效的节点从group里面剔除。

4) 组成员管理

MGR需要维护组内节点的状态(ONLINE,RECOVERING,OFFLINE),对于失效的节点,由其他节点决定是否剔除。对于新加入的节点,需要维护它的视图与其他节点的视图保持一致。

5) 容错能力

MGR基于分布式一致性算法实现,一个组允许部分节点挂掉,只要保证大多数节点仍然存活并且之间的通讯是没有问题的,那么这个组对外仍然能够提供服务!假设一个MGR由2n+1个节点,那么允许n个节点失效,这个MGR仍然能够对外提供服务。比如有3个节点组成的一个GR,可允许1个节点失效,这个GR仍然能够提供服务。

6) 部署方便简单。

7) 最后结论

对比之前的5.6的双主模式,5.7的组复制模式不管从部署还是管理都要方便很多。

2.3 组复制模式介绍

MGR提供了single-primary和multi-primary两种模式。其中,single-primary mode(单写模式) 组内只有一个节点负责写入,读可以从任意一个节点读取,组内数据保持最终一致;multi-primary mode(多写模式),即写会下发到组内所有节点,组内所有节点同时可读,也是能够保证组内数据最终一致性。尤其要注意:一个MGR的所有节点必须配置使用同一种模式,不可混用!

1) 单写模式

单写模式group内只有一台节点可写可读,其他节点只可以读。

对于group的部署,需要先跑起primary节点(即那个可写可读的节点,read_only = 0)然后再跑起其他的节点,并把这些节点一一加进group。其他的节点就会自动同步primary节点上面的变化,然后将自己设置为只读模式(read_only = 1)。当primary节点意外宕机或者下线,在满足大多数节点存活的情况下,group内部发起选举,选出下一个可用的读节点,提升为primary节点。primary选举根据group内剩下存活节点的UUID按字典序升序来选择,即剩余存活的节点按UUID字典序排列,然后选择排在最前的节点作为新的primary节点。

单写模式部署(单机多实例)

在一个节点上运行三个MySQL实例,然后把其中一个实例部署为主,其他两个节点部署为从;主写,从读;这种模式适用于实验和自己练习。

特别重要:在切换primary期间,mysql group不会处理应用重连接到新的主,这需要应用层自己或者由另外的中间件层(proxy or router)去保证!

2) 多写模式

group内的所有机器都是primary节点,同时可以进行读写操作,并且数据是最终一致的。

该模式不好的地方在于: 非rpm包安装,目前使用rpm方式没有配置成功;启动还是处于手动方式,可以编写sys V方式启动脚本;性能上面没有做压测。

多机单写部署

在三个节点上分别部署MySQL实例,然后把其中一个实例部署为主节点,其他两个节点部署为从节点;主写,从读; 当主节点OFFLINE(下线)时,两个从节点会选举出一个注节点,但是在应用中的连接IP是不会随着更换的,需要重新进行配置。这种模式在节点故障率比较高的场景不适用,会导致应用找不到数据库。

多机多写部署

在三个节点上分别部署MySQL实例,每个节点都接收写请求;额外可以加入一个节点,测试节点的动态增加。

三、基于GTID的组复制分布式集群的环境部署记录

需要清楚知道:MySQL复制组能够以一种自动优先选择的单主模式运行,在某个时间只有一个服务器接受更新 。但是对于更高优先级的用户,组能够以多主模式部署,所有的服务器都能够接受更新,即使它们是同时发生的。组复制中存在着一种内建的组成员关系服务用来保持组的视图一致,并且在任意时间对于组中的所有的服务器都可用。MySQL服务器能够退出或者加入组中,而且视图也会相应的更新。有时服务器可能会意外的退出组(故障),在这种情况下失败检测机制检测这种情况并且告知复制组视图发生了变化,这所有的一切都是自动实现的。

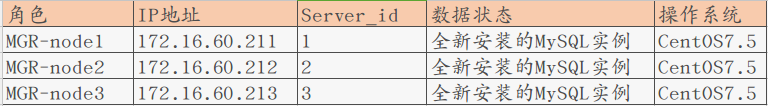

3.1 实验环境

[root@MGR-node1 ~]# cat /etc/redhat-release CentOS Linux release 7.5.1804 (Core) 为了方便实验,关闭所有节点的防火墙 [root@MGR-node1 ~]# systemctl stop firewalld [root@MGR-node1 ~]# firewall-cmd --state not running [root@MGR-node1 ~]# cat /etc/sysconfig/selinux |grep "SELINUX=disabled" SELINUX=disabled [root@MGR-node1 ~]# setenforce 0 setenforce: SELinux is disabled [root@MGR-node1 ~]# getenforce Disabled 特别要注意一个关键点: 必须保证各个mysql节点的主机名不一致,并且能通过主机名找到各成员! 则必须要在每个节点的/etc/hosts里面做主机名绑定,否则后续将节点加入group组会失败!报错RECOVERING!! [root@MGR-node1 ~]# cat /etc/hosts ........ 172.16.60.211 MGR-node1 172.16.60.212 MGR-node2 172.16.60.213 MGR-node3

3.2 安装Mysql5.7

在三个mysql节点机上使用yum方式安装Mysql5.7,参考:https://www.cnblogs.com/kevingrace/p/8340690.html

安装MySQL yum资源库

[root@MGR-node1 ~]# yum localinstall https://dev.mysql.com/get/mysql57-community-release-el7-8.noarch.rpm

安装MySQL 5.7

[root@MGR-node1 ~]# yum install -y mysql-community-server

启动MySQL服务器和MySQL的自动启动

[root@MGR-node1 ~]# systemctl start mysqld.service

[root@MGR-node1 ~]# systemctl enable mysqld.service

设置登录密码

由于MySQL从5.7开始不允许首次安装后使用空密码进行登录!为了加强安全性,系统会随机生成一个密码以供管理员首次登录使用,

这个密码记录在/var/log/mysqld.log文件中,使用下面的命令可以查看此密码:

[root@MGR-node1 ~]# cat /var/log/mysqld.log|grep 'A temporary password'

2019-01-11T05:53:17.824073Z 1 [Note] A temporary password is generated for root@localhost: TaN.k:*Qw2xs

使用上面查看的密码TaN.k:*Qw2xs 登录mysql,并重置密码为123456

[root@MGR-node1 ~]# mysql -p #输入默认的密码:TaN.k:*Qw2xs

.............

mysql> set global validate_password_policy=0;

Query OK, 0 rows affected (0.00 sec)

mysql> set global validate_password_length=1;

Query OK, 0 rows affected (0.00 sec)

mysql> set password=password("123456");

Query OK, 0 rows affected, 1 warning (0.00 sec)

mysql> flush privileges;

Query OK, 0 rows affected (0.00 sec)

查看mysql版本

[root@MGR-node1 ~]# mysql -p123456

........

mysql> select version();

+-----------+

| version() |

+-----------+

| 5.7.24 |

+-----------+

1 row in set (0.00 sec)

=====================================================================

温馨提示

mysql5.7通过上面默认安装后,执行语句可能会报错:

ERROR 1819 (HY000): Your password does not satisfy the current policy requirements

这个报错与Mysql 密码安全策略validate_password_policy的值有关,validate_password_policy可以取0、1、2三个值:

解决办法:

set global validate_password_policy=0;

set global validate_password_length=1;

3.3 MGR组复制配置 (本案例采用MGR多写模式)

第一个节点MGR-node1的配置

先给这组MGR起个组名,组名可以随便起,但是不能使用主机的GTID!

通过节点的uuid作为loose-group_replication_group_name的组名,并且每个节点的这个组名必须一样!

这里使用MGR-node1节点mysql里的uuid作为组名

mysql> select uuid();

+--------------------------------------+

| uuid() |

+--------------------------------------+

| 5db40c3c-180c-11e9-afbf-005056ac6820 |

+--------------------------------------+

1 row in set (0.00 sec)

[root@MGR-node1 ~]# cp /etc/my.cnf /etc/my.cnf.bak

[root@MGR-node1 ~]# >/etc/my.cnf

[root@MGR-node1 ~]# vim /etc/my.cnf

[mysqld]

datadir = /var/lib/mysql

socket = /var/lib/mysql/mysql.sock

symbolic-links = 0

log-error = /var/log/mysqld.log

pid-file = /var/run/mysqld/mysqld.pid

#GTID:

server_id = 1

gtid_mode = on

enforce_gtid_consistency = on

master_info_repository=TABLE

relay_log_info_repository=TABLE

binlog_checksum=NONE

#binlog

log_bin = mysql-bin

log-slave-updates = 1

binlog_format = row

sync-master-info = 1

sync_binlog = 1

#relay log

skip_slave_start = 1

transaction_write_set_extraction=XXHASH64 #以便在server收集写集合的同时将其记录到二进制日志。写集合基于每行的主键,并且是行更改后的唯一标识此标识将用于检测冲突。

loose-group_replication_group_name="5db40c3c-180c-11e9-afbf-005056ac6820" #组的名字可以随便起,但不能用主机的GTID! 所有节点的这个组名必须保持一致!

loose-group_replication_start_on_boot=off #为了避免每次启动自动引导具有相同名称的第二个组,所以设置为OFF。

loose-group_replication_local_address= "172.16.60.211:24901"

loose-group_replication_group_seeds= "172.16.60.211:24901,172.16.60.212:24901,172.16.60.213:24901"

loose-group_replication_bootstrap_group=off

loose-group_replication_single_primary_mode=off #关闭单主模式的参数(本例测试时多主模式,所以关闭该项)

loose-group_replication_enforce_update_everywhere_checks=on #开启多主模式的参数

loose-group_replication_ip_whitelist="172.16.60.0/24,127.0.0.1/8" # 允许加入组复制的客户机来源的ip白名单

===============================================================================

特别提示:

把MGR-node1的 /etc/my.cnf 文件配置好后,直接拷贝给另外的两个节点MGR-node2、MGR-node3

然后再在其他两个节点上修改server_id和loose-group_replication_local_address即可,其他都不动!

[root@MGR-node1 ~]# rsync -e "ssh -p22" -avpgolr /etc/my.cnf root@172.16.60.212:/etc/

[root@MGR-node1 ~]# rsync -e "ssh -p22" -avpgolr /etc/my.cnf root@172.16.60.213:/etc/

===============================================================================

配置完成后,要记得重启mysqld服务 (重启之前,先情况mysqld服务日志)

[root@MGR-node1 ~]# >/var/log/mysqld.log

[root@MGR-node1 ~]# systemctl restart mysqld

查看mysql日志

[root@MGR-node1 ~]# grep password /var/log/mysqld.log

2019-01-14T15:19:02.847387Z 0 [Note] Shutting down plugin 'validate_password'

2019-01-14T15:19:04.121045Z 0 [Note] Shutting down plugin 'sha256_password'

2019-01-14T15:19:04.121048Z 0 [Note] Shutting down plugin 'mysql_native_password'

登录mysql进行相关设置操作

[root@MGR-node1 ~]# mysql -p123456

............

mysql> show databases;

+--------------------+

| Database |

+--------------------+

| information_schema |

| mysql |

| performance_schema |

| sys |

+--------------------+

4 rows in set (0.00 sec)

mysql> SET SQL_LOG_BIN=0; #即不记录二进制日志

Query OK, 0 rows affected (0.00 sec)

mysql> GRANT REPLICATION SLAVE ON *.* TO rpl_slave@'%' IDENTIFIED BY 'slave@123';

Query OK, 0 rows affected, 1 warning (0.00 sec)

mysql> FLUSH PRIVILEGES;

Query OK, 0 rows affected (0.00 sec)

mysql> reset master;

Query OK, 0 rows affected (0.11 sec)

mysql> SET SQL_LOG_BIN=1;

Query OK, 0 rows affected (0.00 sec)

mysql> CHANGE MASTER TO MASTER_USER='rpl_slave', MASTER_PASSWORD='slave@123' FOR CHANNEL 'group_replication_recovery';

Query OK, 0 rows affected, 2 warnings (0.29 sec)

mysql> INSTALL PLUGIN group_replication SONAME 'group_replication.so'; #安装复制组插件

Query OK, 0 rows affected (0.04 sec)

mysql> SHOW PLUGINS;

+----------------------------+----------+--------------------+----------------------+---------+

| Name | Status | Type | Library | License |

+----------------------------+----------+--------------------+----------------------+---------+

| binlog | ACTIVE | STORAGE ENGINE | NULL | GPL |

| mysql_native_password | ACTIVE | AUTHENTICATION | NULL | GPL |

| sha256_password | ACTIVE | AUTHENTICATION | NULL | GPL |

| CSV | ACTIVE | STORAGE ENGINE | NULL | GPL |

| MEMORY | ACTIVE | STORAGE ENGINE | NULL | GPL |

| InnoDB | ACTIVE | STORAGE ENGINE | NULL | GPL |

| INNODB_TRX | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_LOCKS | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_LOCK_WAITS | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_CMP | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_CMP_RESET | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_CMPMEM | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_CMPMEM_RESET | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_CMP_PER_INDEX | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_CMP_PER_INDEX_RESET | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_BUFFER_PAGE | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_BUFFER_PAGE_LRU | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_BUFFER_POOL_STATS | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_TEMP_TABLE_INFO | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_METRICS | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_FT_DEFAULT_STOPWORD | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_FT_DELETED | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_FT_BEING_DELETED | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_FT_CONFIG | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_FT_INDEX_CACHE | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_FT_INDEX_TABLE | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_TABLES | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_TABLESTATS | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_INDEXES | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_COLUMNS | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_FIELDS | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_FOREIGN | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_FOREIGN_COLS | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_TABLESPACES | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_DATAFILES | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_VIRTUAL | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| MyISAM | ACTIVE | STORAGE ENGINE | NULL | GPL |

| MRG_MYISAM | ACTIVE | STORAGE ENGINE | NULL | GPL |

| PERFORMANCE_SCHEMA | ACTIVE | STORAGE ENGINE | NULL | GPL |

| ARCHIVE | ACTIVE | STORAGE ENGINE | NULL | GPL |

| BLACKHOLE | ACTIVE | STORAGE ENGINE | NULL | GPL |

| FEDERATED | DISABLED | STORAGE ENGINE | NULL | GPL |

| partition | ACTIVE | STORAGE ENGINE | NULL | GPL |

| ngram | ACTIVE | FTPARSER | NULL | GPL |

| rpl_semi_sync_master | ACTIVE | REPLICATION | semisync_master.so | GPL |

| validate_password | ACTIVE | VALIDATE PASSWORD | validate_password.so | GPL |

| group_replication | ACTIVE | GROUP REPLICATION | group_replication.so | GPL |

+----------------------------+----------+--------------------+----------------------+---------+

47 rows in set (0.00 sec)

mysql> SET GLOBAL group_replication_bootstrap_group=ON; #只在第一个节点执行这个步骤

Query OK, 0 rows affected (0.00 sec)

mysql> START GROUP_REPLICATION;

ERROR 3096 (HY000): The START GROUP_REPLICATION command failed as there was an error when initializing the group communication layer.

如上出现如上错误,则需要先stop之后做才可以

mysql> STOP GROUP_REPLICATION;

Query OK, 0 rows affected (0.00 sec)

mysql> SET GLOBAL group_replication_bootstrap_group=ON;

Query OK, 0 rows affected (0.00 sec)

mysql> START GROUP_REPLICATION;

Query OK, 0 rows affected (2.14 sec)

mysql> SET GLOBAL group_replication_bootstrap_group=OFF;

Query OK, 0 rows affected (0.00 sec)

mysql> SELECT * FROM performance_schema.replication_group_members;

+---------------------------+--------------------------------------+-------------+-------------+--------------+

| CHANNEL_NAME | MEMBER_ID | MEMBER_HOST | MEMBER_PORT | MEMBER_STATE |

+---------------------------+--------------------------------------+-------------+-------------+--------------+

| group_replication_applier | 317e2aad-1565-11e9-9c2e-005056ac6820 | MGR-node1 | 3306 | ONLINE |

+---------------------------+--------------------------------------+-------------+-------------+--------------+

1 row in set (0.00 sec)

比如要保证上面的group_replication_applier的状态为"ONLINE"才对!

创建一个测试库

mysql> CREATE DATABASE kevin CHARACTER SET utf8 COLLATE utf8_general_ci;

Query OK, 1 row affected (0.03 sec)

mysql> use kevin;

Database changed

mysql> create table if not exists haha (id int(10) PRIMARY KEY AUTO_INCREMENT,name varchar(50) NOT NULL);

Query OK, 0 rows affected (0.24 sec)

mysql> insert into kevin.haha values(1,"wangshibo"),(2,"guohuihui"),(3,"yangyang"),(4,"shikui");

Query OK, 4 rows affected (0.07 sec)

Records: 4 Duplicates: 0 Warnings: 0

mysql> select * from kevin.haha;

+----+-----------+

| id | name |

+----+-----------+

| 1 | wangshibo |

| 2 | guohuihui |

| 3 | yangyang |

| 4 | shikui |

+----+-----------+

4 rows in set (0.00 sec)

第二个节点MGR-node2的配置

配置MGR-node2节点的my.cnf文件

只需要修改 server_id 和 loose-group_replication_local_address的配置即可 (和MGR-node1的my.cnf文件配置相比较)

[root@MGR-node2 ~]# cat /etc/my.cnf

[mysqld]

datadir = /var/lib/mysql

socket = /var/lib/mysql/mysql.sock

symbolic-links = 0

log-error = /var/log/mysqld.log

pid-file = /var/run/mysqld/mysqld.pid

#GTID:

server_id = 2

gtid_mode = on

enforce_gtid_consistency = on

master_info_repository=TABLE

relay_log_info_repository=TABLE

binlog_checksum=NONE

#binlog

log_bin = mysql-bin

log-slave-updates = 1

binlog_format = row

sync-master-info = 1

sync_binlog = 1

#relay log

skip_slave_start = 1

transaction_write_set_extraction=XXHASH64

loose-group_replication_group_name="5db40c3c-180c-11e9-afbf-005056ac6820"

loose-group_replication_start_on_boot=off

loose-group_replication_local_address= "172.16.60.212:24901"

loose-group_replication_group_seeds= "172.16.60.211:24901,172.16.60.212:24901,172.16.60.213:24901"

loose-group_replication_bootstrap_group=off

loose-group_replication_single_primary_mode=off

loose-group_replication_enforce_update_everywhere_checks=on

loose-group_replication_ip_whitelist="172.16.60.0/24,127.0.0.1/8"

配置完成后,要记得重启mysqld服务 (重启之前,先情况mysqld服务日志)

[root@MGR-node2 ~]# >/var/log/mysqld.log

[root@MGR-node2 ~]# systemctl restart mysqld

查看mysql日志

[root@MGR-node2 ~]# grep password /var/log/mysqld.log

2019-01-14T15:53:14.293490Z 0 [Note] Shutting down plugin 'validate_password'

2019-01-14T15:53:16.144077Z 0 [Note] Shutting down plugin 'sha256_password'

2019-01-14T15:53:16.144080Z 0 [Note] Shutting down plugin 'mysql_native_password'

登录mysql进行相关设置操作

[root@MGR-node2 ~]# mysql -p123456

.........

mysql> show databases;

+--------------------+

| Database |

+--------------------+

| information_schema |

| mysql |

| performance_schema |

| sys |

+--------------------+

4 rows in set (0.00 sec)

mysql> SET SQL_LOG_BIN=0;

Query OK, 0 rows affected (0.00 sec)

mysql> GRANT REPLICATION SLAVE ON *.* TO rpl_slave@'%' IDENTIFIED BY 'slave@123';

Query OK, 0 rows affected, 1 warning (0.00 sec)

mysql> FLUSH PRIVILEGES;

Query OK, 0 rows affected (0.00 sec)

mysql> reset master;

Query OK, 0 rows affected (0.10 sec)

mysql> SET SQL_LOG_BIN=1;

Query OK, 0 rows affected (0.00 sec)

mysql> CHANGE MASTER TO MASTER_USER='rpl_slave', MASTER_PASSWORD='slave@123' FOR CHANNEL 'group_replication_recovery';

Query OK, 0 rows affected, 2 warnings (0.21 sec)

mysql> INSTALL PLUGIN group_replication SONAME 'group_replication.so';

Query OK, 0 rows affected (0.04 sec)

mysql> SHOW PLUGINS;

+----------------------------+----------+--------------------+----------------------+---------+

| Name | Status | Type | Library | License |

+----------------------------+----------+--------------------+----------------------+---------+

| binlog | ACTIVE | STORAGE ENGINE | NULL | GPL |

| mysql_native_password | ACTIVE | AUTHENTICATION | NULL | GPL |

| sha256_password | ACTIVE | AUTHENTICATION | NULL | GPL |

| CSV | ACTIVE | STORAGE ENGINE | NULL | GPL |

| MEMORY | ACTIVE | STORAGE ENGINE | NULL | GPL |

| InnoDB | ACTIVE | STORAGE ENGINE | NULL | GPL |

| INNODB_TRX | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_LOCKS | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_LOCK_WAITS | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_CMP | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_CMP_RESET | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_CMPMEM | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_CMPMEM_RESET | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_CMP_PER_INDEX | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_CMP_PER_INDEX_RESET | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_BUFFER_PAGE | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_BUFFER_PAGE_LRU | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_BUFFER_POOL_STATS | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_TEMP_TABLE_INFO | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_METRICS | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_FT_DEFAULT_STOPWORD | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_FT_DELETED | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_FT_BEING_DELETED | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_FT_CONFIG | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_FT_INDEX_CACHE | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_FT_INDEX_TABLE | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_TABLES | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_TABLESTATS | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_INDEXES | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_COLUMNS | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_FIELDS | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_FOREIGN | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_FOREIGN_COLS | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_TABLESPACES | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_DATAFILES | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_VIRTUAL | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| MyISAM | ACTIVE | STORAGE ENGINE | NULL | GPL |

| MRG_MYISAM | ACTIVE | STORAGE ENGINE | NULL | GPL |

| PERFORMANCE_SCHEMA | ACTIVE | STORAGE ENGINE | NULL | GPL |

| ARCHIVE | ACTIVE | STORAGE ENGINE | NULL | GPL |

| BLACKHOLE | ACTIVE | STORAGE ENGINE | NULL | GPL |

| FEDERATED | DISABLED | STORAGE ENGINE | NULL | GPL |

| partition | ACTIVE | STORAGE ENGINE | NULL | GPL |

| ngram | ACTIVE | FTPARSER | NULL | GPL |

| rpl_semi_sync_slave | ACTIVE | REPLICATION | semisync_slave.so | GPL |

| validate_password | ACTIVE | VALIDATE PASSWORD | validate_password.so | GPL |

| group_replication | ACTIVE | GROUP REPLICATION | group_replication.so | GPL |

+----------------------------+----------+--------------------+----------------------+---------+

47 rows in set (0.00 sec)

这里只需要执行这一步即可!

mysql> START GROUP_REPLICATION;

Query OK, 0 rows affected (4.58 sec)

查看组内情况,发现MGR-node2已经成功加入这个组内了。

注意:这里一定要将三个节点的/etc/hosts文件里绑定主机名,否则这里添加组的时候会一直报错:RECOVERING (必须要是ONLINE才行)

mysql> SELECT * FROM performance_schema.replication_group_members;

+---------------------------+--------------------------------------+-------------+-------------+--------------+

| CHANNEL_NAME | MEMBER_ID | MEMBER_HOST | MEMBER_PORT | MEMBER_STATE |

+---------------------------+--------------------------------------+-------------+-------------+--------------+

| group_replication_applier | 2c1efc46-1565-11e9-ab8e-00505688047c | MGR-node2 | 3306 | ONLINE |

| group_replication_applier | 317e2aad-1565-11e9-9c2e-005056ac6820 | MGR-node1 | 3306 | ONLINE |

+---------------------------+--------------------------------------+-------------+-------------+--------------+

2 rows in set (0.00 sec)

查看下,发现已经将MGR-node1节点添加的数据同步过来了

mysql> show databases;

+--------------------+

| Database |

+--------------------+

| information_schema |

| kevin |

| mysql |

| performance_schema |

| sys |

+--------------------+

5 rows in set (0.00 sec)

mysql> select * from kevin.haha;

+----+-----------+

| id | name |

+----+-----------+

| 1 | wangshibo |

| 2 | guohuihui |

| 3 | yangyang |

| 4 | shikui |

+----+-----------+

4 rows in set (0.00 sec)

第三个节点MGR-node3的配置

配置MGR-node3节点的my.cnf文件

只需要修改 server_id 和 loose-group_replication_local_address的配置即可 (和MGR-node1的my.cnf文件配置相比较)

[root@MGR-node3 ~]# vim /etc/my.cnf

[mysqld]

datadir = /var/lib/mysql

socket = /var/lib/mysql/mysql.sock

symbolic-links = 0

log-error = /var/log/mysqld.log

pid-file = /var/run/mysqld/mysqld.pid

#GTID:

server_id = 3

gtid_mode = on

enforce_gtid_consistency = on

master_info_repository=TABLE

relay_log_info_repository=TABLE

binlog_checksum=NONE

#binlog

log_bin = mysql-bin

log-slave-updates = 1

binlog_format = row

sync-master-info = 1

sync_binlog = 1

#relay log

skip_slave_start = 1

transaction_write_set_extraction=XXHASH64

loose-group_replication_group_name="5db40c3c-180c-11e9-afbf-005056ac6820"

loose-group_replication_start_on_boot=off

loose-group_replication_local_address= "172.16.60.213:24901"

loose-group_replication_group_seeds= "172.16.60.211:24901,172.16.60.212:24901,172.16.60.213:24901"

loose-group_replication_bootstrap_group=off

loose-group_replication_single_primary_mode=off

loose-group_replication_enforce_update_everywhere_checks=on

loose-group_replication_ip_whitelist="172.16.60.0/24,127.0.0.1/8"

配置完成后,要记得重启mysqld服务 (重启之前,先情况mysqld服务日志)

[root@MGR-node3 ~]# >/var/log/mysqld.log

[root@MGR-node3 ~]# systemctl restart mysqld

查看mysql日志

[root@MGR-node3 ~]# grep password /var/log/mysqld.log

2019-01-14T16:40:15.251663Z 0 [Note] Shutting down plugin 'validate_password'

2019-01-14T16:40:16.997360Z 0 [Note] Shutting down plugin 'sha256_password'

2019-01-14T16:40:16.997363Z 0 [Note] Shutting down plugin 'mysql_native_password'

登录mysql进行相关设置操作

[root@MGR-node3 ~]# mysql -p123456

..........

mysql> show databases;

+--------------------+

| Database |

+--------------------+

| information_schema |

| mysql |

| performance_schema |

| sys |

+--------------------+

4 rows in set (0.00 sec)

mysql> SET SQL_LOG_BIN=0;

Query OK, 0 rows affected (0.00 sec)

mysql> GRANT REPLICATION SLAVE ON *.* TO rpl_slave@'%' IDENTIFIED BY 'slave@123';

Query OK, 0 rows affected, 1 warning (0.00 sec)

mysql> FLUSH PRIVILEGES;

Query OK, 0 rows affected (0.00 sec)

mysql> reset master;

Query OK, 0 rows affected (0.12 sec)

mysql> SET SQL_LOG_BIN=1;

Query OK, 0 rows affected (0.00 sec)

mysql> CHANGE MASTER TO MASTER_USER='rpl_slave', MASTER_PASSWORD='slave@123' FOR CHANNEL 'group_replication_recovery';

Query OK, 0 rows affected, 2 warnings (0.29 sec)

mysql> INSTALL PLUGIN group_replication SONAME 'group_replication.so';

Query OK, 0 rows affected (0.03 sec)

mysql> SHOW PLUGINS;

+----------------------------+----------+--------------------+----------------------+---------+

| Name | Status | Type | Library | License |

+----------------------------+----------+--------------------+----------------------+---------+

| binlog | ACTIVE | STORAGE ENGINE | NULL | GPL |

| mysql_native_password | ACTIVE | AUTHENTICATION | NULL | GPL |

| sha256_password | ACTIVE | AUTHENTICATION | NULL | GPL |

| CSV | ACTIVE | STORAGE ENGINE | NULL | GPL |

| MEMORY | ACTIVE | STORAGE ENGINE | NULL | GPL |

| InnoDB | ACTIVE | STORAGE ENGINE | NULL | GPL |

| INNODB_TRX | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_LOCKS | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_LOCK_WAITS | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_CMP | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_CMP_RESET | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_CMPMEM | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_CMPMEM_RESET | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_CMP_PER_INDEX | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_CMP_PER_INDEX_RESET | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_BUFFER_PAGE | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_BUFFER_PAGE_LRU | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_BUFFER_POOL_STATS | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_TEMP_TABLE_INFO | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_METRICS | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_FT_DEFAULT_STOPWORD | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_FT_DELETED | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_FT_BEING_DELETED | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_FT_CONFIG | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_FT_INDEX_CACHE | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_FT_INDEX_TABLE | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_TABLES | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_TABLESTATS | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_INDEXES | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_COLUMNS | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_FIELDS | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_FOREIGN | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_FOREIGN_COLS | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_TABLESPACES | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_DATAFILES | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| INNODB_SYS_VIRTUAL | ACTIVE | INFORMATION SCHEMA | NULL | GPL |

| MyISAM | ACTIVE | STORAGE ENGINE | NULL | GPL |

| MRG_MYISAM | ACTIVE | STORAGE ENGINE | NULL | GPL |

| PERFORMANCE_SCHEMA | ACTIVE | STORAGE ENGINE | NULL | GPL |

| ARCHIVE | ACTIVE | STORAGE ENGINE | NULL | GPL |

| BLACKHOLE | ACTIVE | STORAGE ENGINE | NULL | GPL |

| FEDERATED | DISABLED | STORAGE ENGINE | NULL | GPL |

| partition | ACTIVE | STORAGE ENGINE | NULL | GPL |

| ngram | ACTIVE | FTPARSER | NULL | GPL |

| rpl_semi_sync_slave | ACTIVE | REPLICATION | semisync_slave.so | GPL |

| validate_password | ACTIVE | VALIDATE PASSWORD | validate_password.so | GPL |

| group_replication | ACTIVE | GROUP REPLICATION | group_replication.so | GPL |

+----------------------------+----------+--------------------+----------------------+---------+

47 rows in set (0.00 sec)

这里只需要执行这一步即可!

mysql> START GROUP_REPLICATION;

Query OK, 0 rows affected (3.45 sec)

查看组内情况,发现MGR-node3已经成功加入这个组内了。

注意:这里一定要将三个节点的/etc/hosts文件里绑定主机名,否则这里添加组的时候会一直报错:RECOVERING (必须要是ONLINE才行)

mysql> SELECT * FROM performance_schema.replication_group_members;

+---------------------------+--------------------------------------+-------------+-------------+--------------+

| CHANNEL_NAME | MEMBER_ID | MEMBER_HOST | MEMBER_PORT | MEMBER_STATE |

+---------------------------+--------------------------------------+-------------+-------------+--------------+

| group_replication_applier | 2658b203-1565-11e9-9f8b-005056880888 | MGR-node3 | 3306 | ONLINE |

| group_replication_applier | 2c1efc46-1565-11e9-ab8e-00505688047c | MGR-node2 | 3306 | ONLINE |

| group_replication_applier | 317e2aad-1565-11e9-9c2e-005056ac6820 | MGR-node1 | 3306 | ONLINE |

+---------------------------+--------------------------------------+-------------+-------------+--------------+

3 rows in set (0.00 sec)

查看下,发现已经将在其他节点上添加的数据同步过来了

mysql> show databases;

+--------------------+

| Database |

+--------------------+

| information_schema |

| kevin |

| mysql |

| performance_schema |

| sys |

+--------------------+

5 rows in set (0.00 sec)

mysql> select * from kevin.haha;

+----+-----------+

| id | name |

+----+-----------+

| 1 | wangshibo |

| 2 | guohuihui |

| 3 | yangyang |

| 4 | shikui |

+----+-----------+

4 rows in set (0.00 sec)

组复制数据同步测试

在任意一个节点上执行 mysql> SELECT * FROM performance_schema.replication_group_members; +---------------------------+--------------------------------------+-------------+-------------+--------------+ | CHANNEL_NAME | MEMBER_ID | MEMBER_HOST | MEMBER_PORT | MEMBER_STATE | +---------------------------+--------------------------------------+-------------+-------------+--------------+ | group_replication_applier | 2658b203-1565-11e9-9f8b-005056880888 | MGR-node3 | 3306 | ONLINE | | group_replication_applier | 2c1efc46-1565-11e9-ab8e-00505688047c | MGR-node2 | 3306 | ONLINE | | group_replication_applier | 317e2aad-1565-11e9-9c2e-005056ac6820 | MGR-node1 | 3306 | ONLINE | +---------------------------+--------------------------------------+-------------+-------------+--------------+ 3 rows in set (0.00 sec) 如上,说明已经在MGR-node1、MGR-node2、MGR-node3 三个节点上成功部署了基于GTID的组复制同步环境。 现在在三个节点中的任意一个上面更新数据,那么其他两个节点的数据库都会将新数据同步过去的! 1)在MGR-node1节点数据库更新数据 mysql> delete from kevin.haha where id>2; Query OK, 2 rows affected (0.14 sec) 接着在MGR-node2、MGR-node3节点数据库查看,发现更新后数据已经同步过来了! mysql> select * from kevin.haha; +----+-----------+ | id | name | +----+-----------+ | 1 | wangshibo | | 2 | guohuihui | +----+-----------+ 2 rows in set (0.00 sec) 2)在MGR-node2节点数据库更新数据 mysql> insert into kevin.haha values(11,"beijing"),(12,"shanghai"),(13,"anhui"); Query OK, 3 rows affected (0.06 sec) Records: 3 Duplicates: 0 Warnings: 0 接着在MGR-node1、MGR-node3节点数据库查看,发现更新后数据已经同步过来了! mysql> select * from kevin.haha; +----+-----------+ | id | name | +----+-----------+ | 1 | wangshibo | | 2 | guohuihui | | 11 | beijing | | 12 | shanghai | | 13 | anhui | +----+-----------+ 5 rows in set (0.00 sec) 3)在MGR-node3节点数据库更新数据 mysql> update kevin.haha set id=100 where name="anhui"; Query OK, 1 row affected (0.16 sec) Rows matched: 1 Changed: 1 Warnings: 0 mysql> delete from kevin.haha where id=12; Query OK, 1 row affected (0.22 sec) 接着在MGR-node1、MGR-node2节点数据库查看,发现更新后数据已经同步过来了! mysql> select * from kevin.haha; +-----+-----------+ | id | name | +-----+-----------+ | 1 | wangshibo | | 2 | guohuihui | | 11 | beijing | | 100 | anhui | +-----+-----------+ 4 rows in set (0.00 sec)

组复制故障测试

当组内的某个节点发生故障时,会自动从将该节点从组内踢出,与其他节点隔离。剩余的节点之间保持主从复制的正常同步关系。当该节点的故障恢复后,只需手动激活组复制即可(即执行"START GROUP_REPLICATION;");

1)比如 MGR-node1的mysql发生故障,比如关闭mysql服务(或者该节点网络故障,其他节点与之通信失败等) [root@MGR-node1 mysql]# systemctl stop mysqld [root@MGR-node1 mysql]# ps -ef|grep mysql root 4662 26047 0 01:02 pts/0 00:00:00 grep --color=auto mysql [root@MGR-node1 mysql]# 在剩余的两个节点中的任意一个查看 mysql> SELECT * FROM performance_schema.replication_group_members; +---------------------------+--------------------------------------+-------------+-------------+--------------+ | CHANNEL_NAME | MEMBER_ID | MEMBER_HOST | MEMBER_PORT | MEMBER_STATE | +---------------------------+--------------------------------------+-------------+-------------+--------------+ | group_replication_applier | 2658b203-1565-11e9-9f8b-005056880888 | MGR-node3 | 3306 | ONLINE | | group_replication_applier | 2c1efc46-1565-11e9-ab8e-00505688047c | MGR-node2 | 3306 | ONLINE | +---------------------------+--------------------------------------+-------------+-------------+--------------+ 2 rows in set (0.00 sec) 如上,在MGR-node1节点的mysql发生故障后,会自动从这个组内踢出,剩余的两个节点的组复制同步关系正常! 在MGR-node3节点更新数据 mysql> update kevin.haha set id=3 where name="anhui"; Query OK, 1 row affected (0.11 sec) Rows matched: 1 Changed: 1 Warnings: 0 接在在另一个节点MGR-node2上查看,发现更新数据已同步过来 mysql> select * from kevin.haha; +----+-----------+ | id | name | +----+-----------+ | 1 | wangshibo | | 2 | guohuihui | | 3 | anhui | | 11 | beijing | +----+-----------+ 4 rows in set (0.00 sec) 2)当MGR-node1节点的mysql服务恢复后,是什么情况呢? [root@MGR-node1 mysql]# systemctl start mysqld [root@MGR-node1 mysql]# ps -ef|grep mysqld mysql 4846 1 0 01:04 ? 00:00:00 /usr/sbin/mysqld --daemonize --pid-file=/var/run/mysqld/mysqld.pid root 4965 26047 0 01:05 pts/0 00:00:00 grep --color=auto mysqld 在剩余的两个节点中的任意一个查看 mysql> SELECT * FROM performance_schema.replication_group_members; +---------------------------+--------------------------------------+-------------+-------------+--------------+ | CHANNEL_NAME | MEMBER_ID | MEMBER_HOST | MEMBER_PORT | MEMBER_STATE | +---------------------------+--------------------------------------+-------------+-------------+--------------+ | group_replication_applier | 2658b203-1565-11e9-9f8b-005056880888 | MGR-node3 | 3306 | ONLINE | | group_replication_applier | 2c1efc46-1565-11e9-ab8e-00505688047c | MGR-node2 | 3306 | ONLINE | +---------------------------+--------------------------------------+-------------+-------------+--------------+ 2 rows in set (0.00 sec) 如上发现,MGR-node1节点恢复后,不会自动添加到组内,需要手动激活下该节点的组复制功能 [root@MGR-node1 mysql]# mysql -p123456 ........... mysql> START GROUP_REPLICATION; Query OK, 0 rows affected (2.89 sec) 再次查看,就会发现MGR-node1节点已经重新添加到组内了 mysql> SELECT * FROM performance_schema.replication_group_members; +---------------------------+--------------------------------------+-------------+-------------+--------------+ | CHANNEL_NAME | MEMBER_ID | MEMBER_HOST | MEMBER_PORT | MEMBER_STATE | +---------------------------+--------------------------------------+-------------+-------------+--------------+ | group_replication_applier | 2658b203-1565-11e9-9f8b-005056880888 | MGR-node3 | 3306 | ONLINE | | group_replication_applier | 2c1efc46-1565-11e9-ab8e-00505688047c | MGR-node2 | 3306 | ONLINE | | group_replication_applier | 317e2aad-1565-11e9-9c2e-005056ac6820 | MGR-node1 | 3306 | ONLINE | +---------------------------+--------------------------------------+-------------+-------------+--------------+ 3 rows in set (0.01 sec) MGR-node1节点恢复后,并重新添加到组内后,其他节点更新的数据也会及时同步过来! mysql> select * from kevin.haha; +----+-----------+ | id | name | +----+-----------+ | 1 | wangshibo | | 2 | guohuihui | | 3 | anhui | | 11 | beijing | +----+-----------+ 4 rows in set (0.00 sec) =========================================================================== 要是三个节点都发生故障的话,在节点的故障都恢复后,需要手动重新做组复制,操作流程如下: 第一个节点 mysql> reset master; mysql> SET SQL_LOG_BIN=1; mysql> CHANGE MASTER TO MASTER_USER='rpl_slave', MASTER_PASSWORD='slave@123' FOR CHANNEL 'group_replication_recovery'; mysql> STOP GROUP_REPLICATION; mysql> SET GLOBAL group_replication_bootstrap_group=ON; mysql> START GROUP_REPLICATION; mysql> SET GLOBAL group_replication_bootstrap_group=OFF; mysql> SELECT * FROM performance_schema.replication_group_members; 第二个节点 mysql> reset master; mysql> SET SQL_LOG_BIN=1; mysql> CHANGE MASTER TO MASTER_USER='rpl_slave', MASTER_PASSWORD='slave@123' FOR CHANNEL 'group_replication_recovery'; mysql> START GROUP_REPLICATION; mysql> SELECT * FROM performance_schema.replication_group_members; 第三个节点 mysql> reset master; mysql> SET SQL_LOG_BIN=1; mysql> CHANGE MASTER TO MASTER_USER='rpl_slave', MASTER_PASSWORD='slave@123' FOR CHANNEL 'group_replication_recovery'; mysql> START GROUP_REPLICATION; mysql> SELECT * FROM performance_schema.replication_group_members;

MGR节点由于网络出现异常导致的错误解决方法

MGR组内本来有三个节点,但是MGR-node3节点突然从复制组内踢出去了。 mysql> SELECT * FROM performance_schema.replication_group_members; +---------------------------+--------------------------------------+-------------+-------------+--------------+ | CHANNEL_NAME | MEMBER_ID | MEMBER_HOST | MEMBER_PORT | MEMBER_STATE | +---------------------------+--------------------------------------+-------------+-------------+--------------+ | group_replication_applier | 3fee1651-1eeb-11e9-a2f2-00505688047c | MGR-node2 | 3306 | ONLINE | | group_replication_applier | 40ecddaf-1eeb-11e9-94bf-005056ac6820 | MGR-node1 | 3306 | ONLINE | +---------------------------+--------------------------------------+-------------+-------------+--------------+ 3 rows in set (0.00 sec) 查看MGR-node3节点日志发现: [root@MGR-node3 ~]# tail -f /var/log/mysqld.log ......... 2019-01-11T11:17:09.890375Z 0 [ERROR] Plugin group_replication reported: 'Member was expelled from the group due to network failures, changing member status to ERROR.' 错误日志写了是由于网络出现异常导致节点被踢出的组复制,试着重新加入群组: mysql> start group_replication; ERROR 3093 (HY000): The START GROUP_REPLICATION command failed since the group is already running. 解决办法:先停掉这个MGR-node3节点的复制重新打开试下 mysql> stop group_replication; Query OK, 0 rows affected (7.18 sec) mysql> start group_replication; Query OK, 0 rows affected (3.32 sec) mysql> SELECT * FROM performance_schema.replication_group_members; +---------------------------+--------------------------------------+-------------+-------------+--------------+ | CHANNEL_NAME | MEMBER_ID | MEMBER_HOST | MEMBER_PORT | MEMBER_STATE | +---------------------------+--------------------------------------+-------------+-------------+--------------+ | group_replication_applier | 340ed31a-1eeb-11e9-8221-005056880888 | MGR-node3 | 3306 | ONLINE | | group_replication_applier | 3fee1651-1eeb-11e9-a2f2-00505688047c | MGR-node2 | 3306 | ONLINE | | group_replication_applier | 40ecddaf-1eeb-11e9-94bf-005056ac6820 | MGR-node1 | 3306 | ONLINE | +---------------------------+--------------------------------------+-------------+-------------+--------------+ 3 rows in set (0.00 sec) MGR-node3节点重新恢复到复制组内,查看该节点的数据,发现数据也会同步过来的。 如果是多个节点是因为这个原因被踢出复制组,则采用上面方法进行恢复,至此所有节点都恢复正常!

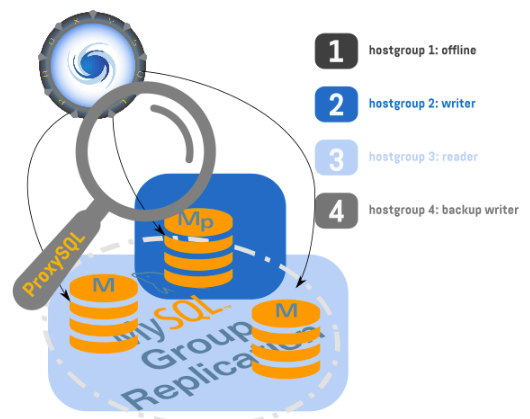

MGR可以使用ProxySQL代理插件,比如三个MGR写节点,一个为当前写节点,另外两个为备份写节点。当前写节点发生故障后,会自会切换到其他正常的备份写节点上。

ProxySQL在MySQL的代理和负载均衡中一直处于领先地位,对MGR提供了很好的原生支持,其中包含了诸如缓存查询,多路复用,流量镜像,读写分离,路由等等的强力功能。在最新的功能性增强中,包含了对MGR的原生支持,不在需要使用第三方脚本进行适配。

最新的增强中,提供了对单写和多写集群组的支持,甚至可以在多写组上指定只由某个成员进行写入操作。

在新版本的ProxySQL中,比如在一个七个节点的多写集群中,指定2组写节点,2组备用写节点,3个只读节点的操作。即ProxySQL虽然识别出来所有的节点皆为写节点,但只路由写操作到选定的两个写节点(通过Hostgroup的方式),同时将另外两个写节点添加到备用写节点组中,最后三个读节点加入读组。(本案例中的组皆为ProxySQL中的hostgroup含义)。除此之外,ProxySQL还可以限制连接访问集群中超出最大设定落后事务值的慢节点(应该是通过mysql_servers.max_replication_lag来控制)。

基于以上部署的MGR节点环境 (即 3 个master节点的多写集群),以下记录ProxySQL + MGR (多写模式) 配置过程:

在172.16.60.211/212/213 三个节点中的任意一个节点的mysql上执行: mysql> SELECT * FROM performance_schema.replication_group_members; +---------------------------+--------------------------------------+-------------+-------------+--------------+ | CHANNEL_NAME | MEMBER_ID | MEMBER_HOST | MEMBER_PORT | MEMBER_STATE | +---------------------------+--------------------------------------+-------------+-------------+--------------+ | group_replication_applier | a2078290-1f25-11e9-b50e-00505688047c | MGR-node2 | 3306 | ONLINE | | group_replication_applier | aaf67b46-1f25-11e9-a9a1-005056ac6820 | MGR-node1 | 3306 | ONLINE | | group_replication_applier | b5df6e60-1f25-11e9-b1bd-005056880888 | MGR-node3 | 3306 | ONLINE | +---------------------------+--------------------------------------+-------------+-------------+--------------+ 3 rows in set (0.00 sec) mysql> select * from kevin.haha; +----+-----------+ | id | name | +----+-----------+ | 1 | wangshibo | | 2 | guohuihui | | 11 | beijing | | 12 | shanghai | +----+-----------+ 4 rows in set (0.00 sec) 可以看出,基于上述部署的MGR环境,3个master节点的多写模式,现在MGR组复制内的三个节点都是ONLINE在线同步状态。

现在追加一台节点172.16.60.220作为ProxySQL代理节点,操作记录如下:

1) 准备工作

[root@mysql-proxy ~]# cat /etc/redhat-release CentOS Linux release 7.5.1804 (Core) [root@mysql-proxy ~]# hostnamectl set-hostname mysql-proxy [root@mysql-proxy ~]# hostname mysql-proxy [root@mysql-proxy ~]# systemctl stop firewalld [root@mysql-proxy ~]# firewall-cmd --state not running [root@mysql-proxy ~]# setenforce 0 [root@mysql-proxy ~]# cat /etc/sysconfig/selinux |grep "SELINUX=disabled" SELINUX=disabled [root@mysql-proxy ~]# hostname -I 172.16.60.220

- 安装mysql客户端,用于在本机连接到ProxySQL的管理接口

[root@mysql-proxy ~]# vim /etc/yum.repos.d/mariadb.repo [mariadb] name = MariaDB baseurl = http://yum.mariadb.org/10.3.5/centos6-amd64 gpgkey=https://yum.mariadb.org/RPM-GPG-KEY-MariaDB gpgcheck=1 安装mysql-clinet客户端 [root@mysql-proxy ~]# yum install -y MariaDB-client ============================================================================ 如果遇到报错: Error: MariaDB-compat conflicts with 1:mariadb-libs-5.5.60-1.el7_5.x86_64 You could try using --skip-broken to work around the problem You could try running: rpm -Va --nofiles --nodigest 解决办法: [root@mysql-proxy ~]# rpm -qa|grep mariadb mariadb-libs-5.5.60-1.el7_5.x86_64 [root@mysql-proxy ~]# rpm -e mariadb-libs-5.5.60-1.el7_5.x86_64 --nodeps [root@mysql-proxy ~]# yum install -y MariaDB-client

- 安装proxysql

proxysql的rpm包下载地址: https://pan.baidu.com/s/1S1_b5DKVCpZSOUNmtCXrrg

提取密码: 5t1c

[root@mysql-proxy ~]# yum install -y perl-DBI perl-DBD-MySQL

[root@mysql-proxy ~]# rpm -ivh proxysql-1.4.8-1-centos7.x86_64.rpm --force

配置文件详解

[root@mysql-proxy ~]# egrep -v "^#|^$" /etc/proxysql.cnf

datadir="/var/lib/proxysql" #数据目录

admin_variables=

{

admin_credentials="admin:admin" #连接管理端的用户名与密码

mysql_ifaces="0.0.0.0:6032" #管理端口,用来连接proxysql的管理数据库!

}

mysql_variables=

{

threads=4 #指定转发端口开启的线程数量

max_connections=2048

default_query_delay=0

default_query_timeout=36000000

have_compress=true

poll_timeout=2000

interfaces="0.0.0.0:6033" #指定转发端口,用于连接后端真实mysql数据库的,相当于代理作用!

default_schema="information_schema"

stacksize=1048576

server_version="5.5.30" #指定后端mysql的版本

connect_timeout_server=3000

monitor_username="monitor"

monitor_password="monitor"

monitor_history=600000

monitor_connect_interval=60000

monitor_ping_interval=10000

monitor_read_only_interval=1500

monitor_read_only_timeout=500

ping_interval_server_msec=120000

ping_timeout_server=500

commands_stats=true

sessions_sort=true

connect_retries_on_failure=10

}

mysql_servers =

(

)

mysql_users:

(

)

mysql_query_rules:

(

)

scheduler=

(

)

mysql_replication_hostgroups=

(

)

# 在部署过程中,最好使用官方推荐的方式来配置proxy sql

- 启动服务并查看

[root@mysql-proxy ~]# /etc/init.d/proxysql start

Starting ProxySQL: DONE!

[root@mysql-proxy ~]# ss -lntup|grep proxy

tcp LISTEN 0 128 *:6032 *:* users:(("proxysql",pid=2943,fd=24))

tcp LISTEN 0 128 *:6033 *:* users:(("proxysql",pid=2943,fd=22))

tcp LISTEN 0 128 *:6033 *:* users:(("proxysql",pid=2943,fd=21))

tcp LISTEN 0 128 *:6033 *:* users:(("proxysql",pid=2943,fd=20))

tcp LISTEN 0 128 *:6033 *:* users:(("proxysql",pid=2943,fd=19))

- 先初始化Proxysql,将之前的proxysql数据都删除

[root@mysql-proxy ~]# mysql -uadmin -padmin -h127.0.0.1 -P6032 Welcome to the MariaDB monitor. Commands end with ; or \g. Your MySQL connection id is 1 Server version: 5.5.30 (ProxySQL Admin Module) Copyright (c) 2000, 2018, Oracle, MariaDB Corporation Ab and others. Type 'help;' or '\h' for help. Type '\c' to clear the current input statement. MySQL [(none)]> show tables; +--------------------------------------------+ | tables | +--------------------------------------------+ | global_variables | | mysql_collations | | mysql_group_replication_hostgroups | | mysql_query_rules | | mysql_query_rules_fast_routing | | mysql_replication_hostgroups | | mysql_servers | | mysql_users | | proxysql_servers | | runtime_checksums_values | | runtime_global_variables | | runtime_mysql_group_replication_hostgroups | | runtime_mysql_query_rules | | runtime_mysql_query_rules_fast_routing | | runtime_mysql_replication_hostgroups | | runtime_mysql_servers | | runtime_mysql_users | | runtime_proxysql_servers | | runtime_scheduler | | scheduler | +--------------------------------------------+ 20 rows in set (0.001 sec) MySQL [(none)]> delete from scheduler ; Query OK, 0 rows affected (0.000 sec) MySQL [(none)]> delete from mysql_servers; Query OK, 3 rows affected (0.000 sec) MySQL [(none)]> delete from mysql_users; Query OK, 1 row affected (0.000 sec) MySQL [(none)]> delete from mysql_query_rules; Query OK, 0 rows affected (0.000 sec) MySQL [(none)]> delete from mysql_group_replication_hostgroups ; Query OK, 1 row affected (0.000 sec) MySQL [(none)]> LOAD MYSQL VARIABLES TO RUNTIME; Query OK, 0 rows affected (0.000 sec) MySQL [(none)]> SAVE MYSQL VARIABLES TO DISK; Query OK, 94 rows affected (0.175 sec) MySQL [(none)]> LOAD MYSQL SERVERS TO RUNTIME; Query OK, 0 rows affected (0.003 sec) MySQL [(none)]> SAVE MYSQL SERVERS TO DISK; Query OK, 0 rows affected (0.140 sec) MySQL [(none)]> LOAD MYSQL USERS TO RUNTIME; Query OK, 0 rows affected (0.000 sec) MySQL [(none)]> SAVE MYSQL USERS TO DISK; Query OK, 0 rows affected (0.050 sec) MySQL [(none)]> LOAD SCHEDULER TO RUNTIME; Query OK, 0 rows affected (0.000 sec) MySQL [(none)]> SAVE SCHEDULER TO DISK; Query OK, 0 rows affected (0.096 sec) MySQL [(none)]> LOAD MYSQL QUERY RULES TO RUNTIME; Query OK, 0 rows affected (0.000 sec) MySQL [(none)]> SAVE MYSQL QUERY RULES TO DISK; Query OK, 0 rows affected (0.156 sec) MySQL [(none)]>

- 增加proxysql所需要的视图以及存储过程,创建系统视图 (sys.gr_member_routing_candidate_status)

在MGR-node1节点上,创建系统视图sys.gr_member_routing_candidate_status,该视图将为ProxySQL提供组复制相关的监控状态指标。 下载addition_to_sys.sql脚本,在MGR-node1节点执行如下语句导入MySQL即可 (在mgr-node1节点的mysql执行后,会同步到其他两个节点上)。 下载地址: https://pan.baidu.com/s/1bNYHtExy2fmqwvEyQS3sWg 提取密码:wst7 [root@MGR-node1 ~]# ll /root/addition_to_sys.sql -rwxr-xr-x 1 root root 2647 Jan 24 17:14 /root/addition_to_sys.sql 导入sql文件 [root@MGR-node1 ~]# mysql -p123456 < /root/addition_to_sys.sql mysql: [Warning] Using a password on the command line interface can be insecure. 在三个mysql节点上可以查看该视图: mysql> select * from sys.gr_member_routing_candidate_status; +------------------+-----------+---------------------+----------------------+ | viable_candidate | read_only | transactions_behind | transactions_to_cert | +------------------+-----------+---------------------+----------------------+ | YES | NO | 0 | 0 | +------------------+-----------+---------------------+----------------------+ 1 row in set (0.01 sec)

- 在mysql-master主数据库节点上执行:(只需master执行即可,会复制给slave从数据库) (即创建代理端口连接后端真实数据库的用户名和密码)

mysql> GRANT ALL ON *.* TO 'proxysql'@'172.16.60.%' IDENTIFIED BY 'proxysql'; Query OK, 0 rows affected, 1 warning (0.03 sec) mysql> flush privileges; Query OK, 0 rows affected (0.04 sec)

- proxysql增加帐号

MySQL [(none)]> INSERT INTO MySQL_users(username,password,default_hostgroup) VALUES ('proxysql','proxysql',2);

Query OK, 1 row affected (0.000 sec)

MySQL [(none)]> UPDATE global_variables SET variable_value='proxysql' where variable_name='mysql-monitor_username';

Query OK, 1 row affected (0.002 sec)

MySQL [(none)]> UPDATE global_variables SET variable_value='proxysql' where variable_name='mysql-monitor_password';

Query OK, 1 row affected (0.002 sec)

- mysql_servers插入数据 (下面插入命令中的ip也可以换成各个节点的主机名,其他是可自都做了hosts主机名绑定)

MySQL [(none)]> insert into mysql_servers (hostgroup_id, hostname, port) values(2,'172.16.60.211',3306); Query OK, 1 row affected (0.001 sec) MySQL [(none)]> insert into mysql_servers (hostgroup_id, hostname, port) values(2,'172.16.60.212',3306); Query OK, 1 row affected (0.000 sec) MySQL [(none)]> insert into mysql_servers (hostgroup_id, hostname, port) values(2,'172.16.60.213',3306); Query OK, 1 row affected (0.000 sec) MySQL [(none)]> select * from mysql_servers; +--------------+---------------+------+--------+--------+-------------+-----------------+---------------------+---------+----------------+---------+ | hostgroup_id | hostname | port | status | weight | compression | max_connections | max_replication_lag | use_ssl | max_latency_ms | comment | +--------------+---------------+------+--------+--------+-------------+-----------------+---------------------+---------+----------------+---------+ | 2 | 172.16.60.211 | 3306 | ONLINE | 1 | 0 | 1000 | 0 | 0 | 0 | | | 2 | 172.16.60.212 | 3306 | ONLINE | 1 | 0 | 1000 | 0 | 0 | 0 | | | 2 | 172.16.60.213 | 3306 | ONLINE | 1 | 0 | 1000 | 0 | 0 | 0 | | +--------------+---------------+------+--------+--------+-------------+-----------------+---------------------+---------+----------------+---------+ 3 rows in set (0.000 sec)

- 将MGR集群的分组定义和关键参数写入mysql_group_replication_hostgroups

如下,执行插入命令 "insert into mysql_group_replication_hostgroups (writer_hostgroup,backup_writer_hostgroup,reader_hostgroup, offline_hostgroup,active,max_writers,writer_is_also_reader,max_transactions_behind) values (2,4,3,1,1,1,0,100);"

MySQL [(none)]> insert into mysql_group_replication_hostgroups (writer_hostgroup,backup_writer_hostgroup,reader_hostgroup, offline_hostgroup,active,max_writers,writer_is_also_reader,max_transactions_behind) values (2,4,3,1,1,1,0,100); Query OK, 1 row affected (0.000 sec)

- 将上面对proxysql所有的变更都加载到环境中

MySQL [(none)]> LOAD MYSQL VARIABLES TO RUNTIME; Query OK, 0 rows affected (0.001 sec) MySQL [(none)]> SAVE MYSQL VARIABLES TO DISK; Query OK, 94 rows affected (0.071 sec) MySQL [(none)]> LOAD MYSQL SERVERS TO RUNTIME; LOAD MYSQL USERS TO RUNTIME; Query OK, 0 rows affected (0.005 sec) MySQL [(none)]> SAVE MYSQL SERVERS TO DISK; SAVE MYSQL USERS TO DISK; Query OK, 0 rows affected (0.260 sec) MySQL [(none)]> LOAD MYSQL USERS TO RUNTIME; Query OK, 0 rows affected (0.000 sec) MySQL [(none)]> SAVE MYSQL USERS TO DISK; Query OK, 0 rows affected (0.101 sec)

- 接下来检查下ProxySQL是如何将MGR节点分发到ProxySQL各个组中

表名前面多了一个runtime前缀,group2为writer group,group4为backup_writer_group, 如下说明172.16.60.213为当前Proxysql连接的节点,其它两个为备用写节点:

MySQL [(none)]> select hostgroup_id, hostname, status from runtime_mysql_servers; +--------------+---------------+--------+ | hostgroup_id | hostname | status | +--------------+---------------+--------+ | 2 | 172.16.60.213 | ONLINE | | 4 | 172.16.60.212 | ONLINE | | 4 | 172.16.60.211 | ONLINE | +--------------+---------------+--------+ 3 rows in set (0.003 sec)

- 如果当前Proxysql连接的节点172.16.60.213出现故障,测试看能否切换到其它节点

如果当前Proxysql连接的节点172.16.60.213出现故障,测试看能否切换到其它节点; 然后再看下172.16.60.213节点的故障恢复后的情况:

将172.16.60.213节点设为read only [root@MGR-node3 ~]# mysql -p123456 .......... mysql> set global read_only=1; Query OK, 0 rows affected (0.00 sec) 再看一下runtime_mysql_servers的状态 [root@mysql-proxy ~]# mysql -uadmin -padmin -h127.0.0.1 -P6032 .......... MySQL [(none)]> select hostgroup_id, hostname, status from runtime_mysql_servers; +--------------+---------------+--------+ | hostgroup_id | hostname | status | +--------------+---------------+--------+ | 2 | 172.16.60.212 | ONLINE | | 3 | 172.16.60.213 | ONLINE | | 4 | 172.16.60.211 | ONLINE | +--------------+---------------+--------+ 3 rows in set (0.003 sec) 发现已经将172.16.60.213节点设为group3了,group3为reader_group。 现在可写的节点即当前Proxysql连接的节点改成了172.16.60.212了。 将172.16.60.213节点恢复为可写模式 [root@MGR-node3 ~]# mysql -p123456 .......... mysql> set global read_only=0; Query OK, 0 rows affected (0.00 sec) 发现runtime_mysql_servers也恢复了过来,即172.16.60.213节点又恢复到了写节点,其他两个都是备写节点 [root@mysql-proxy ~]# mysql -uadmin -padmin -h127.0.0.1 -P6032 .......... MySQL [(none)]> select hostgroup_id, hostname, status from runtime_mysql_servers; +--------------+---------------+--------+ | hostgroup_id | hostname | status | +--------------+---------------+--------+ | 2 | 172.16.60.213 | ONLINE | | 4 | 172.16.60.212 | ONLINE | | 4 | 172.16.60.211 | ONLINE | +--------------+---------------+--------+ 3 rows in set (0.003 sec)

- 数据读写操作的分配情况

登录proxysql的代理端口,连接到代理后端的真实数据库上进行数据更新操作 [root@mysql-proxy ~]# mysql -uproxysql -pproxysql -h 127.0.0.1 -P 6033 ........... ........... MySQL [(none)]> show databases; +--------------------+ | Database | +--------------------+ | information_schema | | kevin | | mysql | | performance_schema | | sys | +--------------------+ 5 rows in set (0.001 sec) MySQL [(none)]> select * from kevin.haha; +----+-----------+ | id | name | +----+-----------+ | 1 | wangshibo | | 2 | guohuihui | | 11 | beijing | | 12 | shanghai | +----+-----------+ 4 rows in set (0.001 sec) MySQL [(none)]> delete from kevin.haha where id>11; Query OK, 1 row affected (0.086 sec) MySQL [(none)]> update kevin.haha set id=100 where name="beijing"; Query OK, 1 row affected (0.072 sec) Rows matched: 1 Changed: 1 Warnings: 0 MySQL [(none)]> select * from kevin.haha; +-----+-----------+ | id | name | +-----+-----------+ | 1 | wangshibo | | 2 | guohuihui | | 100 | beijing | +-----+-----------+ 3 rows in set (0.001 sec) 登录proxysql的管理端口,查看数据读写分配情况 [root@mysql-proxy ~]# mysql -uadmin -padmin -h127.0.0.1 -P6032 .......... .......... MySQL [(none)]> select * from stats_mysql_query_digest; +-----------+--------------------+----------+--------------------+------------------------------------------------------------------+------------+------------+------------+----------+----------+----------+ | hostgroup | schemaname | username | digest | digest_text | count_star | first_seen | last_seen | sum_time | min_time | max_time | +-----------+--------------------+----------+--------------------+------------------------------------------------------------------+------------+------------+------------+----------+----------+----------+ | 2 | information_schema | proxysql | 0x95C1EAD704156371 | delete from kevin.haha where id>? | 2 | 1548400603 | 1548644794 | 262487 | 85409 | 177078 | | 2 | information_schema | proxysql | 0xA307FE1E53D63E71 | select hostgroup_id, hostname, status from runtime_mysql_servers | 2 | 1548400698 | 1548644762 | 1222 | 508 | 714 | | 2 | information_schema | proxysql | 0x8E490D39ED5CA9E2 | delete from kevin.haha id>? | 2 | 1548400598 | 1548644787 | 626 | 299 | 327 | | 2 | information_schema | proxysql | 0xB23E08156F2F7F49 | SELECT * FROM performance_schema.replication_group_members | 2 | 1548399582 | 1548399585 | 8102940 | 1037 | 8101903 | | 2 | information_schema | proxysql | 0xDFD4E74AA19CD894 | update kevin.haha set id=? where name=? | 3 | 1548399161 | 1548644931 | 251211 | 62493 | 116731 | | 2 | information_schema | proxysql | 0xF6FA5DFBB674D5FF | delete from kevin.haha where id > ? | 1 | 1548399125 | 1548399125 | 116382 | 116382 | 116382 | | 2 | information_schema | proxysql | 0xD8AAAE77FA99AC44 | select * from kevin.haha | 10 | 1548399099 | 1548644933 | 4984 | 323 | 948 | | 2 | information_schema | proxysql | 0x9316817E8C74BCB2 | insert into kevin.haha values(?,?),(?,?),(?,?) | 1 | 1548399128 | 1548399128 | 73118 | 73118 | 73118 | | 2 | information_schema | proxysql | 0x02033E45904D3DF0 | show databases | 4 | 1548398871 | 1548644766 | 4122 | 566 | 2132 | | 2 | information_schema | proxysql | 0x226CD90D52A2BA0B | select @@version_comment limit ? | 10 | 1548398868 | 1548644760 | 0 | 0 | 0 | +-----------+--------------------+----------+--------------------+------------------------------------------------------------------+------------+------------+------------+----------+----------+----------+ 10 rows in set (0.002 sec) 发现数据的读写操作都被分配到group2组内,即读写操作走的都是group2组内的172.16.60.213节点。 ============================================================= 现在将172.16.60.213模拟为故障状态 [root@MGR-node3 ~]# mysql -p123456 ........... mysql> set global read_only=1; Query OK, 0 rows affected (0.00 sec) 登录proxysql的管理端口 [root@mysql-proxy ~]# mysql -uadmin -padmin -h127.0.0.1 -P6032 ........... MySQL [(none)]> select hostgroup_id, hostname, status from runtime_mysql_servers; +--------------+---------------+--------+ | hostgroup_id | hostname | status | +--------------+---------------+--------+ | 2 | 172.16.60.212 | ONLINE | | 3 | 172.16.60.213 | ONLINE | | 4 | 172.16.60.211 | ONLINE | +--------------+---------------+--------+ 3 rows in set (0.001 sec) 发现此时的写节点更换为172.16.60.212了,172.16.60.213节点分配到group3 (只读组) 登录proxysql的代理端口,连接到代理后端的真实数据库上进行数据更新操作 [root@mysql-proxy ~]# mysql -uproxysql -pproxysql -h 127.0.0.1 -P 6033 ............ ............ MySQL [(none)]> create database shibo; Query OK, 1 row affected (0.036 sec) MySQL [(none)]> drop database shibo; Query OK, 0 rows affected (0.031 sec) MySQL [information_schema]> show databases; +--------------------+ | Database | +--------------------+ | information_schema | | kevin | | mysql | | performance_schema | | sys | +--------------------+ 5 rows in set (0.001 sec) MySQL [information_schema]> delete from kevin.haha where id>1; Query OK, 5 rows affected (0.098 sec) MySQL [information_schema]> select * from kevin.haha; +----+-----------+ | id | name | +----+-----------+ | 1 | wangshibo | +----+-----------+ 1 row in set (0.001 sec) 登录proxysql的管理端口,查看数据读写分配情况 MySQL [(none)]> select * from stats_mysql_query_digest; +-----------+--------------------+----------+--------------------+------------------------------------------------------------------+------------+------------+------------+----------+----------+----------+ | hostgroup | schemaname | username | digest | digest_text | count_star | first_seen | last_seen | sum_time | min_time | max_time | +-----------+--------------------+----------+--------------------+------------------------------------------------------------------+------------+------------+------------+----------+----------+----------+ | 2 | information_schema | proxysql | 0x58710084C1F7DFA7 | drop database shibo | 1 | 1548645734 | 1548645734 | 31097 | 31097 | 31097 | | 2 | information_schema | proxysql | 0xD880C8B266964B45 | create database shibo | 1 | 1548645692 | 1548645692 | 36262 | 36262 | 36262 | | 2 | information_schema | proxysql | 0x3EA85877510AC608 | select * from stats_mysql_query_digest | 1 | 1548645635 | 1548645635 | 380 | 380 | 380 | | 2 | information_schema | proxysql | 0x226CD90D52A2BA0B | select @@version_comment limit ? | 13 | 1548398867 | 1548645681 | 0 | 0 | 0 | | 2 | information_schema | proxysql | 0x02033E45904D3DF0 | show databases | 5 | 1548398870 | 1548645741 | 4729 | 566 | 2132 | | 2 | information_schema | proxysql | 0x9316817E8C74BCB2 | insert into kevin.haha values(?,?),(?,?),(?,?) | 2 | 1548399127 | 1548645427 | 182202 | 73118 | 109084 | | 2 | information_schema | proxysql | 0xD8AAAE77FA99AC44 | select * from kevin.haha | 15 | 1548399098 | 1548645796 | 7915 | 323 | 1200 | | 2 | information_schema | proxysql | 0xF6FA5DFBB674D5FF | delete from kevin.haha where id > ? | 1 | 1548399124 | 1548399124 | 116382 | 116382 | 116382 | | 2 | information_schema | proxysql | 0x620B328FE9D6D71A | SELECT DATABASE() | 1 | 1548645734 | 1548645734 | 288 | 288 | 288 | | 2 | information_schema | proxysql | 0xDFD4E74AA19CD894 | update kevin.haha set id=? where name=? | 3 | 1548399160 | 1548644930 | 251211 | 62493 | 116731 | | 2 | information_schema | proxysql | 0xB23E08156F2F7F49 | SELECT * FROM performance_schema.replication_group_members | 2 | 1548399581 | 1548399584 | 8102940 | 1037 | 8101903 | | 2 | information_schema | proxysql | 0xA307FE1E53D63E71 | select hostgroup_id, hostname, status from runtime_mysql_servers | 2 | 1548400697 | 1548644761 | 1222 | 508 | 714 | | 2 | information_schema | proxysql | 0x8E490D39ED5CA9E2 | delete from kevin.haha id>? | 2 | 1548400597 | 1548644786 | 626 | 299 | 327 | | 2 | information_schema | proxysql | 0x95C1EAD704156371 | delete from kevin.haha where id>? | 3 | 1548400602 | 1548645795 | 360087 | 85409 | 177078 | +-----------+--------------------+----------+--------------------+------------------------------------------------------------------+------------+------------+------------+----------+----------+----------+ 14 rows in set (0.001 sec) 恢复172.16.60.213的写 mysql> set global read_only=0; Query OK, 0 rows affected (0.00 s属性ec) 登录proxysql的管理端口 [root@mysql-proxy ~]# mysql -uadmin -padmin -h127.0.0.1 -P6032 ......... MySQL [(none)]> select hostgroup_id, hostname, status from runtime_mysql_servers; +--------------+---------------+--------+ | hostgroup_id | hostname | status | +--------------+---------------+--------+ | 2 | 172.16.60.213 | ONLINE | | 4 | 172.16.60.212 | ONLINE | | 4 | 172.16.60.211 | ONLINE | +--------------+---------------+--------+ 3 rows in set (0.003 sec)

这里说明几点

- MGR多写模式下如上配置后,读写操作始终是写到当前的group2写组内的节点。

- 即使是某一个节点设置为read only (分配到group3内),mysql读写操作也只会通过proxysql始终代理到group2组内的节点上。

- 本案例只是可以实现MGR组复制的高可用,数据并没有进行读写分离。要想实现读写分离,需另行配置路由转发规则。

- 本案例实现了proxysql代理后的MGR的高可用,但是proxysql代理层存在单点。线上环境,建议部署proxysql高可用环境。

MGR无感知切换的高可用方案实现方式思考

ProxySQL+MGR部署的两种模型:

1) 靠近应用端

方式: 在应用服务器上直接部署

优点:

- 每个应用服务器有自己的配置 ,配置内容简单,不容易相互影响故障,变更故障风险最小

- 没有瓶颈压力,故障容错最好,单机故障影响最小

- 数据库上可以清楚看到SQL来自哪台应用机器,方便排查故障

- 无需单独机器资源

缺点:

- 每台应用服务器上都需要配置,当数据库架构扩容或者其他变动时,需要应用则的ProxySQL做相应改动

- 当一台应用上需要连接多套数据库时,配置也会开始稍微复杂

2) 靠近数据库端

方式: 通过独立的ProxySQL集群来提供服务

优点:

- 不需要每台应用服务器上配置,集中修改

缺点:

- 容易出现瓶颈,网络、机器性能等

- 集中配置,导致配置非常复杂,可能相互影响,变更故障风险高

- 全部应用通过ProxySQL来连接,数据库上看到具体问题连接来自哪台应用机器,无法进行故障定位

- 需要单独的机器资源来部署ProxySQL,因为流量集中且是应用层,需要考虑性能瓶颈,占用机器资源相对较多

- 机器故障时,影响是面级,通过额外高可用技术来减少影响

综合上面的优缺点来说,使用ProxySQL+MGR来实现应用切换无感知方案其实并不大合适,Haproxy等方案也有类似问题。

所以建议采用下面方案来实现MGR无感知切换:

- 使用浮动IP来实现Mysql MGR的写高可用(浮动IP需要自己实现)。

- 使用LVS来实现Mysql MGR或者普通复制(扩展库)读库的高可用和负载均衡

- 使用DNS域名切换来实现不同机房的切换

在实际线上业务中,MGR无感知故障切换的高可用可以使用的具体方案 (比如三个写节点的MGR):

1)可以利用Keepalived,配置三个VIP,三个MGR节点之间实现相互"两两主从"关系,具体的keepalive的配置可参考: https://www.cnblogs.com/kevingrace/p/6248941.html (文章最后提高了3个VIP的配置)。 keepalived配置中监控mysql服务,当mysql服务挂掉时,实现vip漂移。然后对外提供一个域名 (最好是内网域名), 该域名解析指向这三个VIP地址。

2)在MGR的上层配置ProxySQL代理,通过ProxySQL实现负载均衡和读写分离,然后配置Keepaliced,通过浮动VIP实现ProxySQL的无感知切换。同样,Keepalived配置中监控ProxySQL服务,当ProxySQL服务挂掉时,实现VIP漂移。

目录 返回

首页